DevSecOps Essentials: Software Asset Management

TL;DR / Summary at the end of the post. The information shared in this series is the distilled knowledge gained from my experience building the DevSecOps program at Thermo Fisher Scientific - a Fortune 100 global laboratory sciences company.

Full Disclosure up-front: I am employed as a Code Scanning Architect at GitHub at the time of publishing this article.

A recap of the DevSecOps Essentials

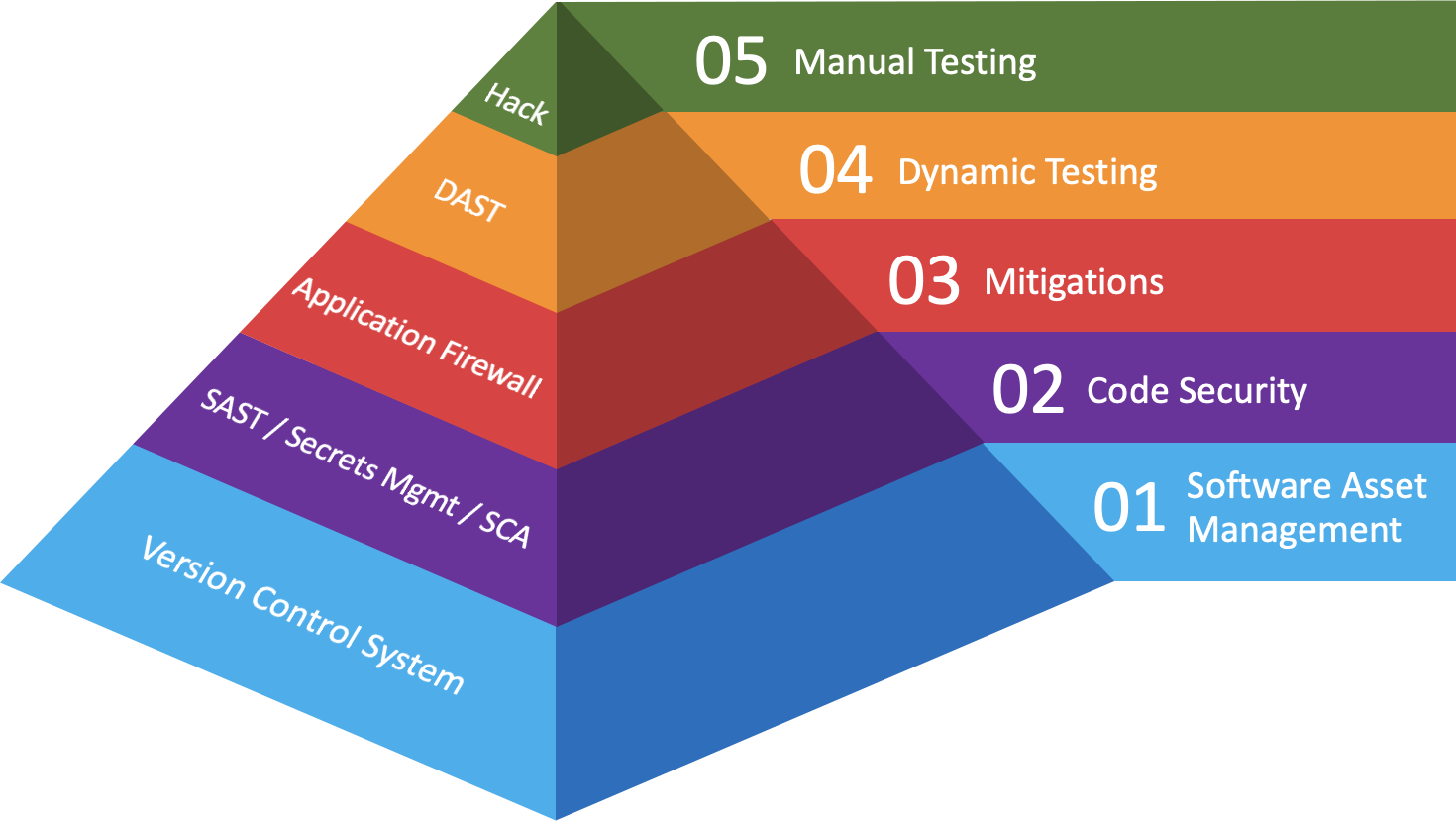

In the previous post in this series, I provided an overview of what I consider to be the “DevSecOps Essentials”, in terms of both practices and their corresponding technologies. They say a picture is worth a thousand words, so the diagram above is how I categorized where companies should be making their investments to maximize the scale and impact of their DevSecOps program. In this post I’ll be talking about Layer 01 - Software Asset Management.

Background

In the Spring of 2019 I traveled to several Thermo Fisher Scientific manufacturing and software development offices across Europe. At the time, both the DevSecOps program and the overarching Product Security organization were still fairly new - so we were doing a global tour that year to spread awareness. My realization that the company needed a centralized version control system happened as a result of that trip.

We were at a site in Finland when it hit me. My colleagues and I were talking with a team of five developers working on device connectivity software for a benchtop instrument, and I asked them where they stored their source code. They pointed to a desktop system sitting in the corner of the lab running a free, git-based version control system. How on earth was I supposed to provide this team with application security tools? Was that box internet accessible? Oh 💩 - was that box internet accessible?! 😱

After my inward panic, we walked across the hall to meet with another team of five developers. They were working on device connectivity software for a different benchtop instrument with the intention of connecting it to the same cloud environment. So of course I asked them where they stored their source code, and they pointed to a desktop system sitting in the corner. Then I asked them if they knew the team from across the hall, to which they replied “No, sorry. We don’t work with them”.

So now I had to figure out how to provide both of these teams with application security tools. What’s more, I had to secure two different device connectivity applications used to connect to the same cloud environment! Doing some rough “back of the napkin” math, if each of these ten developers was making an average of $100k USD, the company was paying about $1M annually for effectively the same software!

Tackling the Version Control problem

Unfortunately, this sort of thing happens all the time in large companies. Thankfully security teams can use this inefficient allocation of resources to the company’s advantage. When it comes to centralizing on a corporate version control system, the pitch is all about “productivity”.

In my situation, if the back-of-the-napkin math was even close to correct, I could save the company close to $500k USD by consolidating development for just two device connectivity software teams. That year I learned of no fewer than seven teams working on such software, with an average of five developers per team. If I were able to consolidate down to just half the number of applications, the math quickly added up to millions in “productivity savings”.
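If you want to run the same napkin math for your own company, here is a minimal Python sketch. The salary and team size are the rough figures from this post, not real payroll data, and the function names are my own for illustration. It also assumes that "half the number of applications" means going from seven teams to four, and that a consolidated team stays the same size while the freed-up developers move on to other work.

```python
# Back-of-the-napkin estimate of duplicated engineering spend.
# Every number here is a rough assumption taken from the post.

AVG_SALARY_USD = 100_000  # assumed average salary per developer
DEVS_PER_TEAM = 5         # assumed average team size


def annual_team_cost(teams: int,
                     devs_per_team: int = DEVS_PER_TEAM,
                     avg_salary: int = AVG_SALARY_USD) -> int:
    """Annual salary cost of `teams` equally sized teams."""
    return teams * devs_per_team * avg_salary


def consolidation_savings(teams_before: int, teams_after: int) -> int:
    """Salary no longer spent on duplicate work after consolidating."""
    return annual_team_cost(teams_before) - annual_team_cost(teams_after)


if __name__ == "__main__":
    # Two teams building effectively the same software.
    print(f"Two teams cost:        ${annual_team_cost(2):,}")
    # Merging those two teams into one.
    print(f"Savings, 2 -> 1 teams: ${consolidation_savings(2, 1):,}")
    # Seven teams consolidated to four (my reading of "half").
    print(f"Savings, 7 -> 4 teams: ${consolidation_savings(7, 4):,}")
```

Swap in your own salary, team size, and team counts. The estimate deliberately ignores licensing, hardware, and administration costs, so treat the result as a floor rather than a precise figure.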

Beyond that, when I began talking with larger organizations in the company about business continuity and disaster recovery plans for their version control systems, I quickly learned that teams were dramatically unprepared for such events. Most of the system administration activities fell to a couple of developers within each organization, and things like patches or upgrades only happened during catastrophic failures (😥).

Moreover, all the costs I started adding up were above and beyond the licensing costs associated with the various version control software platforms the company was paying for. Since these teams didn’t interact with one another (they weren’t even practicing the first tenet of DevOps - communication!), they didn’t consolidate license procurement - and thus weren’t getting the benefit of collectively negotiating on contracts. Talk about leaving money on the table!

Anyway, I think you get the point I’m trying to make here, which is this - there are a heck of a lot of costs associated with fractured version control systems in large companies. Take some time to do the math.

How to support this content: If you find this post useful, enjoyable, or influential (and have the coin to spare) - you can support the content I create via Patreon. Thank you to those who already support this blog! 😊 And now, back to that content you were enjoying! 🎉

The many benefits of a Corporate Version Control System

In order to tackle the growing hydra of device connectivity software applications at Thermo Fisher Scientific, I proposed centralizing teams on a corporate version control system. The concept of inner source is still fairly new to most companies, which is unfortunate because it offers a ton of opportunity for communication, coordination, and collaboration - i.e. the three tenets of DevOps, which many of these teams claimed to be practicing.

Likewise, by centralizing this service, we could relieve developers of their system administration responsibilities so that they could get back to what the company was paying them for - writing software for our customers. Heck, by using a scalable cloud-based service for version control, we could eliminate a lot of the system administration overhead.

Oh, and remember that earlier comment about business continuity and disaster recovery? Yeah, that was put to the test when a major data center outage occurred at the end of a quarter. Thankfully we had purchased a cloud-based version control system just beforehand, and many teams were able to migrate code with checkouts from unaffected continuous integration / continuous deployment (CI/CD) tools.

You can’t secure what you don’t know about

Finally, when you need to go about addressing security for the software the company is selling, the easiest way to do so is by plugging security into the corporate version control system. It bears repeating: you can’t secure the software you don’t know about, and teams can’t hide vulnerable code if they’re all using the same system.

If the software being secured lives in one place, you can likewise reduce the number of security tools in your pipeline while ramping up the scale and impact of the ones you continue using. This saves a lot of expense for companies in terms of security budget - which is a cost center on most balance sheets.

Thankfully it will also require fewer people to scale your security efforts when software is developed in one place. Security engineers are expensive and hard to hire / retain, so being able to manage (and document!) fewer security integrations in a single version control system allows companies to have a bigger impact per security individual.

TL;DR / Summary

By centralizing on a corporate version control system, companies reap extensive productivity and cost savings benefits. By placing developers in a shared system, you encourage communication, coordination, and collaboration (the three tenets of DevOps) - which in turn allows companies to reduce duplicate engineering efforts, leading to a more efficient allocation of resources.

Inner source is a term that is still relatively new to most organizations, and by embracing this practice companies can more efficiently develop new software for their customers. The only way to do this successfully is to consolidate on a corporate version control system that can support all of the company’s engineering teams.

Likewise, by leveraging a scalable, cloud-based platform for version control, companies are able to reduce system administration overhead - as well as simplify business continuity and disaster recovery plans. Moreover, implementing security at scale becomes faster and simpler, even with limited (and oftentimes expensive) security resources.

All that being said, consolidating won’t be easy - but the effort is more than worth it. Finding a cloud-based platform that can support the many needs of development teams is just the first step. Helping those teams migrate code, evolve their development practices, and plug in security tooling will take some time. The end result is more productive software development - and more secure software.

And what company doesn’t want more productive software development that also leads to more secure software?

I think that’s as good a place as any to wrap up this post on Software Asset Management. In my next post I’ll be talking about the security tools that you should consider plugging into your version control system - and which tooling may sound great but can negatively impact all that productivity you just built for your development teams.

Thanks again for stopping by, and until next time remember to git commit && stay classy!

Cheers,

Keith // securingdev
