DevSecOps Essentials: Mitigations

TL;DR / Summary at the end of the post. The information shared in this series is the distilled knowledge gained from my experience building the DevSecOps program at Thermo Fisher Scientific (a global Fortune 100 laboratory sciences company).

Full Disclosure up-front: I am employed as a Code Scanning Architect at GitHub at the time of publishing this blog post.

A recap of the DevSecOps Essentials

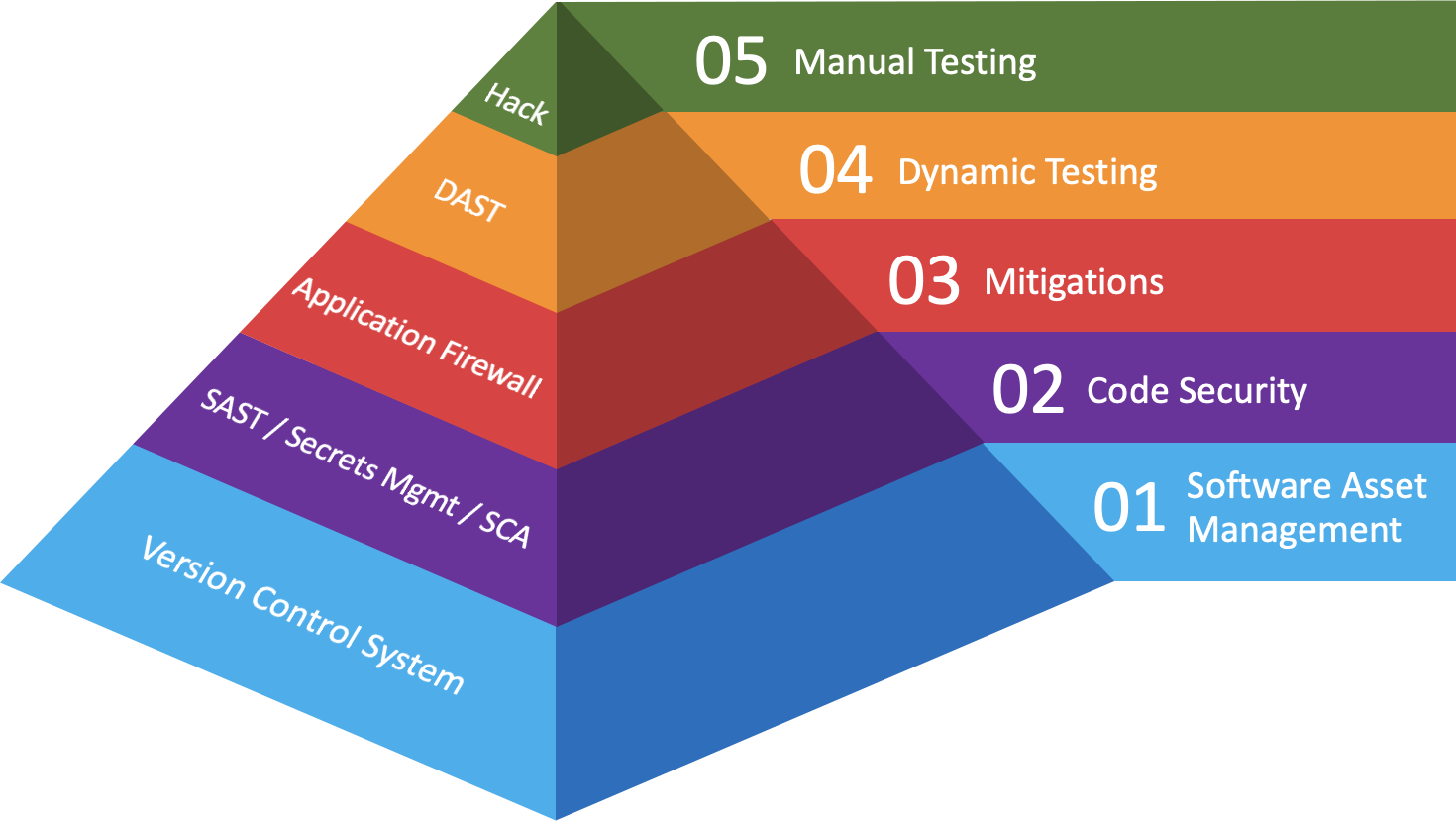

In the first post in this series I provided an overview of what I consider to be the “DevSecOps Essentials”, in terms of both practices and their corresponding technologies. If you’ve done everything you can to address Software Asset Management and Code Security at your company, then it’s time to dive into Layer 03 - Mitigations.

Your code is about to reach production - and if you haven’t made investments to remediate vulnerabilities by now, you’re going to have a bad time without some protections in place. As a reminder, here’s a diagram that recaps how I categorize where companies should make investments in order to maximize the scale and impact of their DevSecOps program:

Background

As stated in my previous post on Code Security, one of the first challenges I faced at Thermo Fisher Scientific was deciding between investing in mitigation(s) or static analysis. At the time I had no team, no real budget allocation (my boss was doing me a solid by finding budget for me), and hardly any connections in the Digital Engineering organization.

You see, Digital Engineering was the division responsible for Thermo Fisher Scientific’s flagship web properties. These two websites are effectively the Amazon.com of laboratory science purchases; you can buy everything from pipettes to personal protective equipment 🥼 and even benchtop devices or microscopes 🔬. Needless to say, of the $24+ billion that Thermo Fisher pulled in that year, a large portion of the company’s revenue flowed through these two websites.

At the time, Digital Engineering had enabled a web application firewall (WAF) at the edge through their Boston-based Content Delivery Network (CDN) provider. Knowing how easy it was to bypass such protections from my experience as a former Top 100 Security Researcher on Bugcrowd, I decided it would be worth trying out some of the modern WAF technologies - and oh $DEITY did I learn some things 😅 But don’t worry, there’s a happy ending to this story that I’ll include in the Summary.

Modern Web Application Firewalls (WAFs)

The web looks nothing like it did two decades ago when the Dot Com boom (and bust) took place - which honestly is probably a good thing, but I digress. Between the advent of single page applications (SPAs), responsive layouts, template engines, microservice architectures, front-end and back-end APIs, advancements in SQL and NoSQL databases, and how easy it became to deploy TLS certificates issued by a certificate authority (CA) to encrypt web traffic - well, legacy WAFs never stood a chance.

Below I’ve listed some of the newer technologies helping address mitigations for the many changes to modern application development that happened over the last two decades. I would say that most of these recommendations are applicable in a “connected application” context (web and mobile), but you might find that some recommendations can carry over into the desktop application space.

That being said, if you’re developing embedded software or desktop applications - my recommendation would be to invest heavily into Code Security and Dynamic Testing while skimming this post for additional perspectives.

Anyway, regardless of which technologies you adopt, one thing to point out here - you’ll probably be allowed just one line item in your budget for a Web Application Firewall. That usually means you’ll have enough money to invest in just one product, but you might be so lucky as to have enough budget for a few items from the list - or convince the business of the need for more than one solution.

Either way, do the right thing and choose a technology that will be easy for you to scale - and one that can provide useful data about your production environment beyond what Security might need. Your IT operations team(s) will thank you.

Runtime Application Self-Protection (RASP)

This category was all the rage before people reverted to calling it a “Web Application Firewall”. That being said, the hype is real on this technology and it often has a positive impact on the security of your applications.

Generally speaking, RASP solutions can be integrated in one of two ways - an agent on the web application server, or as a library bundled into the code which makes API calls to an external service. Either way, the ⚡️ fast speed with which input from remote sources is validated against rule sets here is straight 🔥.

The benefits of integrating this technology are two-fold. First, the rules are constantly being updated by vendors, so emerging threats are quickly mitigated with little effort required from the IT Operations or Security team. Second, the team(s) responsible for maintaining the solution can turn their focus to other business-critical tasks.
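As a rough illustration of the library-style integration described above, here is a minimal Python sketch of checking request input against a rule set before the handler runs. Everything in it is an assumption made for illustration: the `Rule` type, the three regex rules, the `guard` decorator, and the `search` handler are hypothetical, and a real RASP product ships far richer, vendor-maintained rules and hooks much deeper into the runtime.

```python
import re
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern


# Hypothetical rule set for illustration only; vendors maintain the real ones.
RULES = (
    Rule("sql-injection", re.compile(r"['\"]\s*(?:or|and)\s+\d+\s*=\s*\d+", re.I)),
    Rule("path-traversal", re.compile(r"\.\./")),
    Rule("script-tag", re.compile(r"<\s*script\b", re.I)),
)


def first_violation(values: Iterable[str], rules=RULES) -> Optional[str]:
    """Return the name of the first rule matched by any input value, else None."""
    for value in values:
        for rule in rules:
            if rule.pattern.search(value):
                return rule.name
    return None


def guard(handler):
    """Wrap a handler so its parameters are validated before it executes."""
    def wrapped(params: dict):
        violation = first_violation(params.values())
        if violation is not None:
            # "Blocking mode": refuse the request instead of only logging it.
            return 403, f"blocked by rule: {violation}"
        return handler(params)
    return wrapped


@guard
def search(params: dict):
    # Hypothetical application handler.
    return 200, f"results for {params['q']}"
```

Calling `search({"q": "pipettes"})` reaches the handler, while `search({"q": "../../etc/passwd"})` is rejected before the handler ever sees the input. Regex matching like this is easy to bypass on its own, which is part of why vendor-maintained rules matter.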

How to support this content: If you find this post useful, enjoyable, or influential (and have the coin to spare) - you can support the content I create via Patreon. Thank you to those who already support this blog! 😊 And now, back to that content you were enjoying! 🎉

Interactive Application Security Testing (IAST)

Like RASP technologies, IAST solutions take an agent-based approach to application monitoring 🕵️. In many ways this technology can work similarly to RASP, in that it monitors inputs to determine whether an exploit would occur when the source of an input can reach a sink (an execution or storage point) in a dangerous way.
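The source-to-sink idea can be sketched in a few lines of Python. This is a deliberately simplified model, not how any particular IAST agent works: the `RequestMonitor` class and its `source` / `sink` methods are hypothetical names, and the "tracking" here is plain value matching, whereas real agents instrument the runtime to follow data flow.

```python
class RequestMonitor:
    """Toy model: remember untrusted inputs, then check them at dangerous sinks."""

    def __init__(self):
        self.sources = []   # (input name, raw value) pairs seen in this request
        self.findings = []  # (input name, sink name) pairs that look exploitable

    def source(self, name: str, value: str) -> str:
        """Record an untrusted input and hand it back unchanged."""
        self.sources.append((name, value))
        return value

    def sink(self, sink_name: str, payload: str) -> None:
        """Flag any recorded input that reaches the sink verbatim."""
        for name, value in self.sources:
            if value and value in payload:
                self.findings.append((name, sink_name))


monitor = RequestMonitor()
user_input = monitor.source("q", "x' OR '1'='1")

# Unsafe: the raw input is concatenated into the query text.
monitor.sink("sql.execute", "SELECT * FROM products WHERE name = '" + user_input + "'")

# Safer: a parameterized query keeps the input out of the query text.
monitor.sink("sql.execute", "SELECT * FROM products WHERE name = ?")
```

After both calls, only the concatenated query is recorded in `monitor.findings`. The same observation can be used to report a finding (the testing side) or to block the request (the mitigation side), which is the fence-straddling discussed next.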

The key difference between IAST and RASP (as far as I can tell) is that IAST can also be used to test where inputs might lead to exploitable vulnerabilities in an application. In this way the technology straddles the fence between Code Security and Mitigations - and in my experience doesn’t excel in either category. Heck, you might even say it fits into the Dynamic Testing category as well, given the testing capabilities.

If you’re implementing IAST at the Code Security layer, it’s probably too late to stop the code from moving to production - thus becoming dependent on the mitigation capabilities 🛡. If you use it at the mitigations layer, it can work in effectively the same way as a RASP solution - but managing the agents at scale can be difficult. RASP tends to win out over IAST in terms of performance and scalability at this layer.

Likewise, if you’re using it in a Dynamic Application Security Testing (DAST) layer you’ll find that it feels altogether different from the traditional “black box” testing security professionals are used to when running DAST solutions. There are definitely some benefits in giving IAST technologies to a development team, but you’re fooling yourself if you think they have enough time budgeted to set it up and use it effectively.

Distributed Denial-of-Service (DDoS)

Along with the advent of ransomware attacks over the last decade, and the parallel growth in eCommerce 💸, we saw a profound increase in threats made against company websites. As criminals gained access to large collections of compromised systems that were converted into bot networks, it became cheap for them to target websites with thousands (or millions) of requests.

This led to criminals threatening companies with DDoS attacks unless ransoms were paid to keep them away. It was like an electronic shakedown by “the mob”. Unfortunately, there is no guarantee that payment will stop criminals from coming back the following year for another shakedown 🦹. As a result, companies turned to Content Delivery Network (CDN) providers, which carry a large share of the Internet’s traffic, to mitigate this threat.

Thankfully CDN providers have come a long way in this regard, and virtually every provider in this space can offer you protection against these kinds of attacks. If your company is already paying for content delivery, just make sure they’ve added in licensing for DDoS protection and call it a day. No agents to install, no libraries to pull into your code, and very few changes to your corporate DNS records required.

Bot Management

On the other side of the DDoS mitigation coin there are bad bots 🤖 which will crawl your site and scrape content for numerous (and sometimes nefarious) reasons. One example from my experience at Thermo Fisher Scientific was competitors and 3rd party marketplaces scraping pricing data from our flagship web properties.

Likewise, intellectual property theft doesn’t end with stealing designs - it often turns into manufacturing “off-label” versions of the same product, and then selling that product in local (oftentimes Asian) markets. In order to reduce friction in selling these “off-label” devices, such entities will look for product documentation to download and alter for appropriate branding with their “new product”.

The activities these bots perform can be disruptive in numerous ways - the least of which is usually service disruption to your web properties. More serious (and often overlooked) impacts come from bot 🤖 activity skewing site metrics, leading to business decisions being made on products, features, or pricing from polluted data.
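To show how scraping can pollute metrics, here is a small Python sketch that flags likely scrapers from request logs and then recomputes page views without them. The `Hit` record, the `/pricing/` path prefix, and both thresholds are assumptions chosen for illustration. Commercial bot management relies on much stronger signals (fingerprinting, behavioral analysis, reputation data) than simple request counts.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    client_id: str  # hypothetical stable identifier for a client
    path: str


def likely_bots(hits, max_hits=100, min_hits=10, max_pricing_share=0.8):
    """Flag clients with very high volume or an unusually pricing-heavy crawl."""
    total = Counter(h.client_id for h in hits)
    pricing = Counter(h.client_id for h in hits if h.path.startswith("/pricing/"))
    return {
        client
        for client, count in total.items()
        if count > max_hits
        or (count >= min_hits and pricing[client] / count > max_pricing_share)
    }


def page_views(hits, exclude=frozenset()):
    """Count views per path, optionally ignoring flagged clients."""
    return Counter(h.path for h in hits if h.client_id not in exclude)


hits = [Hit("alice", p) for p in ("/", "/pricing/sku-1", "/cart")]
hits += [Hit("scraper", f"/pricing/sku-{i}") for i in range(50)]

bots = likely_bots(hits)
raw_views = page_views(hits)
clean_views = page_views(hits, exclude=bots)
```

In this toy data set the raw counts make every pricing page look visited, while the cleaned counts show that only one product page was viewed by a person. That gap is the kind of polluted data that can mislead product and pricing decisions.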

API Security

As the web has evolved over the last two decades, the use of microservices and API design patterns / architectures has absolutely exploded 💥. Unfortunately I think security organizations have a lot of catching up to do in this space. While there are a number of companies that will sell you a product to help solve API security challenges, it requires a good deal of maturity to do this well.

In a lot of ways, API security challenges stem from insecure design patterns and/or architectures that are hard to secure via tooling. If you’re going to tackle this problem in your organization, the best investment you can make will be in your security engineering team. Talented security architects can design and build templates and/or patterns for developers to use in their APIs, which will help solve this problem at scale.
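One way to picture such a reusable pattern is a decorator that every endpoint opts into, so authorization and input validation are handled the same way everywhere. The Python sketch below is only an assumption of what a template might look like: `secure_endpoint`, the dictionary-shaped request, the scope names, and the `create_order` handler are all hypothetical and not tied to any web framework.

```python
from functools import wraps


def secure_endpoint(required_scope: str, schema: dict):
    """Enforce a scope check and a strict body schema before calling the handler."""
    def decorator(handler):
        @wraps(handler)
        def wrapped(request: dict):
            # Authorization: the caller must hold the scope this endpoint needs.
            if required_scope not in request.get("scopes", ()):
                return {"status": 403, "error": "missing scope"}

            body = request.get("body", {})

            # Reject fields the endpoint never declared (helps against mass assignment).
            unknown = set(body) - set(schema)
            if unknown:
                return {"status": 400, "error": f"unexpected fields: {sorted(unknown)}"}

            # Every declared field must be present with the expected type.
            for field, expected_type in schema.items():
                if not isinstance(body.get(field), expected_type):
                    return {"status": 400, "error": f"invalid field: {field}"}

            return handler(body)
        return wrapped
    return decorator


@secure_endpoint("orders:write", {"sku": str, "quantity": int})
def create_order(body: dict):
    # Hypothetical handler; it only ever sees validated input.
    return {"status": 201, "sku": body["sku"], "quantity": body["quantity"]}
```

The design choice here is that developers declare what an endpoint needs and the shared template enforces it, so a fix or improvement made by the security engineering team lands in every API that uses the pattern.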

Endpoint Protection (Application Allow-listing)

From a DevSecOps perspective, this technology will usually get pulled into the Vulnerability Management budget - but it’s worth mentioning here for awareness. If you can limit the footprint of what software your application server is able to run (either through containerization, or through endpoint protection) you can significantly limit post-exploitation impacts.

The way I generally recommend mitigating for this in modern application development is through containerization and microservice architectures. That being said, for older monolithic applications you’ll want to deploy some form of endpoint protection that can enforce application allow-listing in order to limit which software and processes the server is allowed to run.
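The core check behind application allow-listing can be sketched as a hash comparison: a file may run only if its digest appears on an approved list. This Python example is a simplified assumption of that idea. The `sha256_of` and `is_allowed` helpers are hypothetical, and real endpoint protection products enforce the decision in the operating system (often using signatures and publisher rules as well) rather than in application code.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large binaries do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_allowed(path: Path, allowed_hashes: set) -> bool:
    """Return True only if the file's digest is on the allow-list."""
    return sha256_of(path) in allowed_hashes
```

Because the decision is keyed on content rather than file name or location, a payload dropped onto the server after exploitation fails the check even if it is named like a trusted binary.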

TL;DR / Summary

Given the significant changes and advancements in software development over the last two decades, we have seen an exponential increase in the publicly accessible attack surface that companies have to mitigate for. As in every category of the DevSecOps Essentials, there are some technologies that stand out - where others might be considered a “jack of all trades, and master of none”.

For modern connected applications, Runtime Application Self-Protection (RASP) and Distributed Denial-of-Service (DDoS) mitigation stand out as the primary investments worth considering when mitigating the most disruptive attacks.

On the other hand, if you’re in a small organization (like a startup) you might find Interactive Application Security Testing (IAST) solutions help you cover a few layers of the DevSecOps Essentials at a lower price point that will be easier to implement. But be warned: larger organizations are going to struggle to maintain IAST implementations at scale, and are probably better off focusing on specialized tools.

That being said, larger organizations with mature DevSecOps programs should be adopting bot management and API security practices (if they haven’t already). At some point I’ll be writing a series on Security Maturity: Teams & Their Technologies where I’ll talk more about ways to measure your team’s maturity and decide when to tackle challenges like those mentioned above.

Finally, if you are working on embedded software or desktop applications then my recommendation would be to invest heavily in Code Security and Dynamic Testing technologies. Your software will be the attack vector that criminals use to compromise an endpoint, so it will be worthwhile to harden the software as much as possible so that you avoid needing to tell your customers about critical software updates.

Oh, and that happy ending I mentioned earlier? Well, by the time I was able to push through procurement, Thermo Fisher ended up purchasing a Runtime Application Self-Protection solution that scaled to cover over eighteen hundred (1800+) domains for the equivalent cost of the Boston-based, CDN-provided WAF (which covered just 6 domains). Moreover, we were able to get the solution launched in production using “full blocking mode” with just one person on my team collaborating with Digital Engineering 🚀 Talk about making an impact at scale!

Anyway, thanks again for stopping by! Stay tuned for my next post on Dynamic Application Security Testing, and in the meantime you can git checkout other (usually off-topic) content I’m reading over at Instapaper.

Until next time, remember to git commit && stay classy!

Cheers,

Keith // securingdev
