DevSecOps Essentials: Dynamic Testing

TL;DR / Summary at the end of the post. The information shared in this series is the distilled knowledge gained from my experience building the DevSecOps program at Thermo Fisher Scientific (a global Fortune 100 laboratory sciences company).

Full Disclosure up-front: I am employed as a Code Scanning Architect at GitHub at the time of publishing this blog post.

A recap of the DevSecOps Essentials

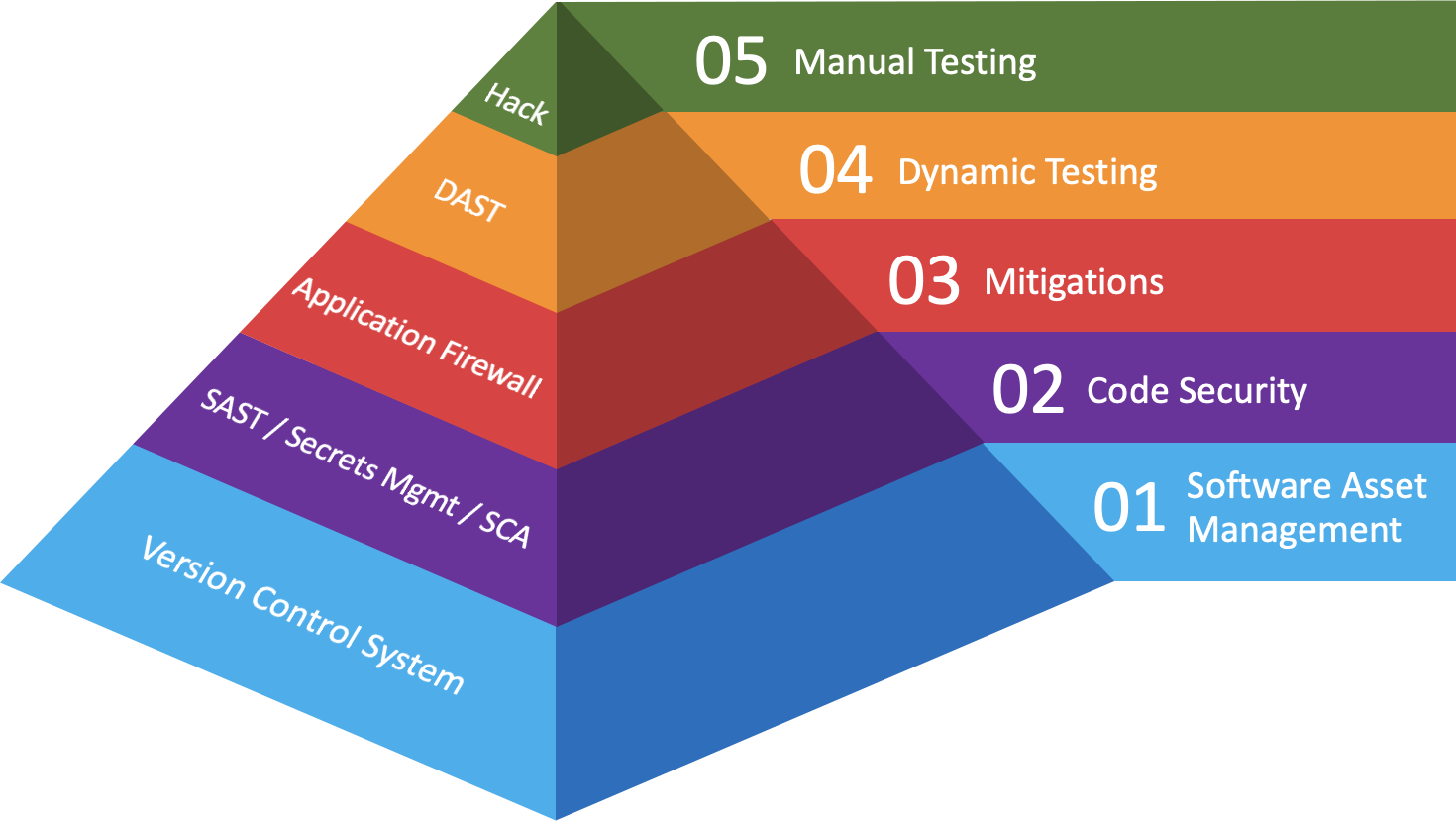

In the first post in this series I gave an overview of what I consider the “DevSecOps Essentials”, in terms of both practices and their corresponding technologies. If you’ve done everything you can to address Software Asset Management, Code Security, and Mitigations for those vulnerabilities you weren’t able to fix, then it’s time to validate how resilient your application really is.

Now that your code is in production, it is all but certain that it is being attacked on a regular basis. Whether by automated dynamic testing or real hackers - you’re going to want the same perspective your adversaries have when it comes to the security of your application(s). Before we get started, the diagram above recaps how I categorize where companies should make investments in order to maximize the scale and impact of their DevSecOps program.

Background

When I arrived at Thermo Fisher Scientific, I had no team, no budget, and just two technologies to work with that were over a decade old. One was a binary static analysis tool that was never really implemented, and the other was a perpetually-licensed dynamic application security testing (DAST) solution that hadn’t downloaded new tests in years.

On top of this, my very first day on the job consisted of responding to an incident with a 3rd party supplier of marketing materials in Germany 🇩🇪. You see, the General Data Protection Regulation (GDPR) had just gone into effect a month prior and the supplier’s website had been hacked. The site’s database held contact information for Thermo Fisher employees (among other companies), and the criminals wanted us to pay a ransom - or they’d report the breach to German authorities.

We of course did not pay the ransom, but this whole incident led to us informing the supplier that we wanted to perform dynamic and manual security testing of their application. Anyway, in the process of testing that week, I found a blind SQL injection vulnerability in the same area of the code they had supposedly fixed - which led to us manually placing orders with the supplier until they could fix the application.

Two years later the application still had a number of security flaws and, as far as we could tell, little-to-no security practices had been implemented in their software development lifecycle. As I like to say, Security is a Feature, and due to their lack of security we allowed the contract to expire. We wouldn’t have been able to make these business decisions if it weren’t for investments made in the ability to perform dynamic testing.

Dynamic Application Security Testing (DAST)

As I wrote about in my post on Mitigations, there have been a lot of changes to software development over the last two decades, and the Dynamic Application Security Testing space has had to adapt in interesting ways as a result. While many of the technologies today are evolving around these changes, you’ll find that the categories for investments in this space have remained fairly static over time.

That being said, my warning to you throughout this post is that these technologies should only be used to validate the impact of the risk(s) you’ve accepted, and to confirm the resilience of the mitigations you’ve put in place. Which means you should be targeting pre-production applications both with and without mitigation(s) in order to get a clear picture of what will happen if your mitigation(s) fail. Oh and Bryan - don’t point the DAST solutions at the production flagship web properties 😉
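As a rough illustration of reading the "with mitigations" and "without mitigations" runs side by side, the sketch below uses a plain set comparison. It assumes each scan has been exported as a list of findings with hypothetical `rule` and `path` fields; those field names and the sample data are made up for the example and do not reflect any particular scanner's output format.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    rule: str   # hypothetical rule identifier, e.g. "sqli"
    path: str   # hypothetical request path the scanner flagged


def compare_runs(without_mitigation, with_mitigation):
    """Bucket findings by whether the mitigation stack hid them."""
    exposed = set(without_mitigation)
    remaining = set(with_mitigation)
    return {
        # Reachable even with mitigations on: the mitigation does not cover these.
        "unmitigated": sorted(exposed & remaining),
        # Only visible with mitigations off: your exposure if the mitigation fails.
        "mitigated_only": sorted(exposed - remaining),
        # Only visible with mitigations on: worth a closer look.
        "new_with_mitigation": sorted(remaining - exposed),
    }


# Illustrative data only.
raw = [Finding("sqli", "/orders"), Finding("xss", "/search"), Finding("open-redirect", "/login")]
shielded = [Finding("xss", "/search")]

report = compare_runs(raw, shielded)
for bucket, findings in report.items():
    print(bucket, [tuple(f) for f in findings])
```

The "mitigated_only" bucket is the one that answers the question above: it lists what an attacker could reach if the mitigation layer were bypassed or misconfigured.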

Anyway, while the results should absolutely be shared with development teams to help prioritize risk remediation, expecting DAST to be a silver bullet for driving remediation is a fool’s errand. At the very least, if you haven’t implemented Code Security then you’re going to have a really hard time understanding the root cause of the vulnerabilities your DAST solutions are uncovering.

Web Application Scanning

Web App Scanners have come a long way over the last two decades. While many have had to overcome the hurdle of tracking “state” within an application - especially with the advent of Single Page Applications (SPAs) 🕸🕷 and JavaScript frameworks - the concept is still fairly straightforward. Point the scanner at your pre-production or staging site, give it credentials and/or a session token, and let it rip.

The volume of findings that come back will vary by how well your technology is performing validation for the vulnerabilities it’s presenting. Given the maturity of this technology, pay close attention to the ratio of false-positives vs. actionable findings you receive. Some newer technologies will even do validation for you, reducing false-positives substantially.
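If you want to track that ratio rather than eyeball it, a per-rule breakdown tends to be more useful than a single number, because it shows which checks are noisy. The sketch below assumes you have triaged findings into `(rule, is_false_positive)` pairs; that shape is an assumption for illustration, not a scanner export format.

```python
from collections import defaultdict


def false_positive_rate_by_rule(triaged):
    """Return {rule: share of that rule's findings triaged as false positives}.

    `triaged` is an iterable of (rule, is_false_positive) pairs.
    """
    totals = defaultdict(int)
    false_positives = defaultdict(int)
    for rule, is_false_positive in triaged:
        totals[rule] += 1
        if is_false_positive:
            false_positives[rule] += 1
    return {rule: false_positives[rule] / totals[rule] for rule in totals}


# Illustrative triage results only.
triaged = [("xss", True), ("xss", True), ("xss", False), ("sqli", False)]
rates = false_positive_rate_by_rule(triaged)
for rule, rate in sorted(rates.items()):
    print(f"{rule}: {rate:.0%} false positives")
```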

Either way, once you have results it’s worthwhile to cross-reference findings against those produced during the Code Scanning phase in order to derive more accurate risk scoring and remediation priorities. If you’ve done your remediations during Code Scanning and applied mitigations, then this is where you’re likely going to find vulnerabilities that get bundled in with Vulnerability Management practices.
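One simple way to do that cross-referencing is to join the two result sets on weakness class. The sketch below assumes both tools report a CWE identifier per finding, and that code scanning results carry a `location` while DAST results carry a `url`; these keys are assumptions for the example. Matching on CWE alone is coarse, so treat a match as a hint about root cause rather than proof.

```python
def prioritize(dast_findings, code_findings):
    """Rank DAST findings, putting those corroborated by code scanning first."""
    locations_by_cwe = {}
    for finding in code_findings:
        locations_by_cwe.setdefault(finding["cwe"], []).append(finding["location"])

    ranked = []
    for finding in dast_findings:
        locations = locations_by_cwe.get(finding["cwe"], [])
        ranked.append({
            "cwe": finding["cwe"],
            "url": finding["url"],
            # Corroborated findings come with candidate root-cause locations.
            "corroborated": bool(locations),
            "candidate_locations": locations,
        })
    # sorted() is stable, so ties keep the scanner's original order.
    return sorted(ranked, key=lambda item: not item["corroborated"])


# Illustrative data only. CWE-79 is cross-site scripting, CWE-89 is SQL injection.
dast = [{"cwe": "CWE-79", "url": "/search"}, {"cwe": "CWE-89", "url": "/orders"}]
code = [{"cwe": "CWE-89", "location": "orders/db.py:42"}]

ranked = prioritize(dast, code)
for item in ranked:
    print(item)
```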

Likewise, this is often where engagement between Security and Operations teams starts happening, as the findings will point out when the underlying web server is unpatched or out of support. Moreover, misconfigurations crop up in ways that can lead to a business-compromising event. Hopefully your mitigations and remediations will happen a lot faster at scale here by using Infrastructure-as-Code practices.

API Scanning

I discussed API Security in my post on Mitigations, which offers slightly different capabilities than what you get from API Scanning solutions. That said, these technologies are sometimes combined to offer a robust solution for your APIs that covers as many as three of the DevSecOps Essentials: Code Security, Mitigations, and Dynamic Testing.

API Scanning solutions differ from API Security technologies in the sense that Scanners are used to find vulnerabilities often associated with the Open Web Application Security Project (OWASP) Top Ten vulnerabilities. As stated previously, some API Scanning solutions will also offer built-in mitigations and/or remediations for the vulnerabilities they uncover.

That being said, I firmly believe API Security starts with secure design patterns and resilient architecture planning. The best investment you can make when it comes to securing your APIs will be to hire talented security architects who can design and build templates for your software engineers to utilize. API Scanning technologies should then be used to validate the resilience of your architecture and design patterns.
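To show what "validating a design pattern" can look like, here is a toy sketch of one check an API scanner might run: asking for other users' objects and seeing whether the API hands them over. Everything in it is hypothetical - the in-memory `ORDERS` store, both handlers, and the `(status, body)` return shape stand in for a real API and are not taken from any product.

```python
ORDERS = {1: {"owner": "alice", "total": 40}, 2: {"owner": "bob", "total": 15}}


def get_order_vulnerable(user, order_id):
    """Toy handler that forgets to check ownership."""
    order = ORDERS.get(order_id)
    return (200, order) if order else (404, None)


def get_order_checked(user, order_id):
    """Toy handler built from a template that enforces ownership."""
    order = ORDERS.get(order_id)
    if order is None:
        return (404, None)
    if order["owner"] != user:
        return (403, None)
    return (200, order)


def probe_object_access(handler, user, order_ids):
    """Return the IDs of other users' orders that `user` was able to read."""
    leaked = []
    for order_id in order_ids:
        status, body = handler(user, order_id)
        if status == 200 and body["owner"] != user:
            leaked.append(order_id)
    return leaked


leaked_vulnerable = probe_object_access(get_order_vulnerable, "alice", ORDERS)
leaked_checked = probe_object_access(get_order_checked, "alice", ORDERS)
print(leaked_vulnerable)  # [2]
print(leaked_checked)     # []
```

The point of the second handler is that the ownership check lives in the template, so the probe passes for every endpoint built from it.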

Fuzzing

The proverbial “throw spaghetti at the wall and see if it sticks” 🍝 of dynamic testing, Fuzzing is the process of providing all sorts of malformed input to an application to observe how it might break. In many ways, this is done to gauge how resilient and performant your application is when hit with a lot of junk input.
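For a feel of the mechanics, below is a minimal, deterministic mutation fuzzer aimed at a toy parser with a planted bug. Real fuzzers add randomness, coverage feedback, and crash de-duplication; none of that is attempted here. The parser, the `INTERESTING` byte values, and the seed input are all invented for this sketch.

```python
INTERESTING = (0x00, 0x01, 0x7F, 0x80, 0xFF)  # boundary-ish byte values


def parse_record(data: bytes):
    """Toy parser: first byte is the payload length, the rest is the payload."""
    if not data:
        raise ValueError("empty input")  # a clean, expected rejection
    length = data[0]
    payload = data[1:]
    # Planted bug: trusts the declared length without checking it.
    terminator = payload[length - 1] if length else None
    return payload[:length], terminator


def mutations(seed: bytes):
    """Yield single-byte substitutions of the seed, then truncations of it."""
    for i in range(len(seed)):
        for value in INTERESTING:
            yield seed[:i] + bytes([value]) + seed[i + 1:]
    for cut in range(len(seed)):
        yield seed[:cut]


def fuzz(target, seed, expected=(ValueError,)):
    """Run every mutation through `target` and collect unexpected exceptions."""
    crashes = []
    for candidate in mutations(seed):
        try:
            target(candidate)
        except expected:
            continue  # the target rejected the input cleanly
        except Exception as exc:
            crashes.append((candidate, type(exc).__name__))
    return crashes


crashes = fuzz(parse_record, b"\x03abc")
for candidate, kind in crashes:
    print(kind, candidate)
```

Each reported input is a reproducer: feeding it back into `parse_record` raises the same exception, which is what you would hand to the development team.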

All that being said, at Thermo Fisher we never went too far down the road with this technology because there were enough problems for us to solve at the Code Security layer that we needed to focus on. Likewise I’m only aware of a couple of free, open source technologies in this space that make performing this testing accessible.

If you’re an Entrepreneur or Venture Capitalist in this space and I’m missing something, please reach out! Otherwise, as far as I can tell this is an area of Information Security testing which has not been thoroughly pioneered as of yet.

How to support this content: If you find this post useful, enjoyable, or influential (and have the coin to spare) - you can support the content I create via Patreon. Thank you to those who already support this blog! 😊 And now, back to that content you were enjoying! 🎉

Interactive Application Security Testing (IAST)

I’ve written at length about IAST in my posts on Code Security and Mitigations, so I’ll try to keep this brief by saying that you can perform dynamic testing with this solution.

Do I think it’s a good fit for dynamic testing? Not really. It exercises vulnerabilities in ways that should already be known from other tools in the Code Security layer, and performs testing in ways that feel sort-of obvious. I’d recommend using it as a decent mitigation technology before using it for testing - but if you’re a startup with a small budget and a few security people then it’s better than nothing.

A few words on Hybrid Scanners

While technically Dynamic Application Security Testing, I would categorize these as the tools which require human drivers 😎🤖. These technologies are meant to augment a security researcher’s ability to map a target application and hint toward where vulnerabilities might be exploitable.

I’ll talk more to this category of tooling (and the advancements they’ve made) in my post on Manual Testing, but in the interim I think it’s worth noting that anything is better than nothing - and that there are plenty of open source technologies in this category which can be run in a build pipeline to perform dynamic testing.

The Future: Chaos Engineering

While I wouldn’t exactly categorize Chaos Engineering as DAST, I think the concepts of “Dynamic Testing” are applicable to what businesses are trying to accomplish in adopting this practice. In layman’s terms, Chaos Engineering is “the discipline of experimenting on a system in order to build confidence in the system’s capability to withstand turbulent conditions in production”.
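To make the idea of an "experiment" a little more tangible, here is a deliberately small, in-process sketch: inject faults into a dependency on a fixed schedule and check that the caller still returns something usable. Real chaos experiments target running systems and infrastructure, not a single function; the `FlakyDependency` wrapper, `fetch_price`, and the fallback shape are all hypothetical names made up for this example.

```python
class FlakyDependency:
    """Wraps a callable and fails on the call numbers in `fail_on` (1-based)."""

    def __init__(self, func, fail_on):
        self.func = func
        self.fail_on = set(fail_on)
        self.calls = 0

    def __call__(self, *args):
        self.calls += 1
        if self.calls in self.fail_on:
            raise ConnectionError(f"injected fault on call {self.calls}")
        return self.func(*args)


def fetch_price(sku):
    """Stand-in for a downstream service call."""
    return {"sku": sku, "price": 10}


def price_with_fallback(dependency, sku, retries=2):
    """Behavior under test: always return a usable answer."""
    for _ in range(retries + 1):
        try:
            return dependency(sku)
        except ConnectionError:
            continue
    # Retry budget exhausted: degrade instead of crashing.
    return {"sku": sku, "price": None, "degraded": True}


# Experiment 1: two transient faults, which the retry budget absorbs.
transient = price_with_fallback(FlakyDependency(fetch_price, fail_on={1, 2}), "A1")
print(transient)

# Experiment 2: the dependency stays down, so the caller degrades.
outage = price_with_fallback(FlakyDependency(fetch_price, fail_on={1, 2, 3}), "A1")
print(outage)
```

The hypothesis is stated up front (the caller always returns a usable answer), the fault is injected on purpose, and the result either builds confidence or exposes a gap.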

With regard to adoption, I think DevSecOps programs need a decent level of maturity to be able to do this well - especially at scale. That being said, while I’m hesitant to call this an “essential” practice for every company today, it is very much an essential practice for companies who have moved their applications to ☁️ “the cloud” ☁️.

There are a number of people I respect who are working in this space - including Kelly Shortridge, Aaron Rinehart, Casey Rosenthal, and especially my good friend James Wickett. Each of these folks is extremely bright and approachable - so if you’re looking for more information on where to get started with this practice, I’d start by reaching out to them.

TL;DR / Summary

While the concepts of Dynamic Testing have remained fairly static over the last two decades - largely focused around testing for the Open Web Application Security Project (OWASP) Top Ten vulnerabilities - the ways in which these technologies have evolved have been interesting.

These days if you’re performing Web Application Security scanning, you should consider expanding the scope to include newer API Scanning technologies to help uncover the authentication, authorization, and data exposure flaws so commonly found with insecure APIs. Likewise, if you’re working on embedded or desktop application software then it would be worthwhile to explore the nascent field of security fuzzing technologies cropping up in the open source community.

That being said, for those of you working on “cloud native” applications, or at companies that are in the process of migrating to ☁️ “the cloud” ☁️, the next frontier of testing will include Chaos Engineering. Fault tolerance and resilient architectures will go a long way toward reducing business risk as companies expose more of their digital assets to the Internet.

No matter what technologies you adopt, I strongly advise against using Dynamic Testing in production environments. Doing so can cause unexpected customer impacts, and might lead to uncomfortable discussions with C-Level executives 😅. Spending the time and effort it takes to set up pre-production environments that include your mitigation stack will help you accurately assess how much risk your business has accepted when code makes it to production.

As always, thanks again for stopping by! Stay tuned for my next post on Manual Testing, and in the meantime you can git checkout other (usually off-topic) content I’m reading over at Instapaper.

Until next time, remember to git commit && stay classy!

Cheers,

Keith // securingdev
