DevSecOps Essentials: Manual Testing

TL;DR / Summary at the end of the post. The information shared in this series is the distilled knowledge gained from my experience building the DevSecOps program at Thermo Fisher Scientific (a global Fortune 100 laboratory sciences company).

Full Disclosure up-front: I am employed as a Code Scanning Architect at GitHub at the time of publishing this blog post.

A recap of the DevSecOps Essentials

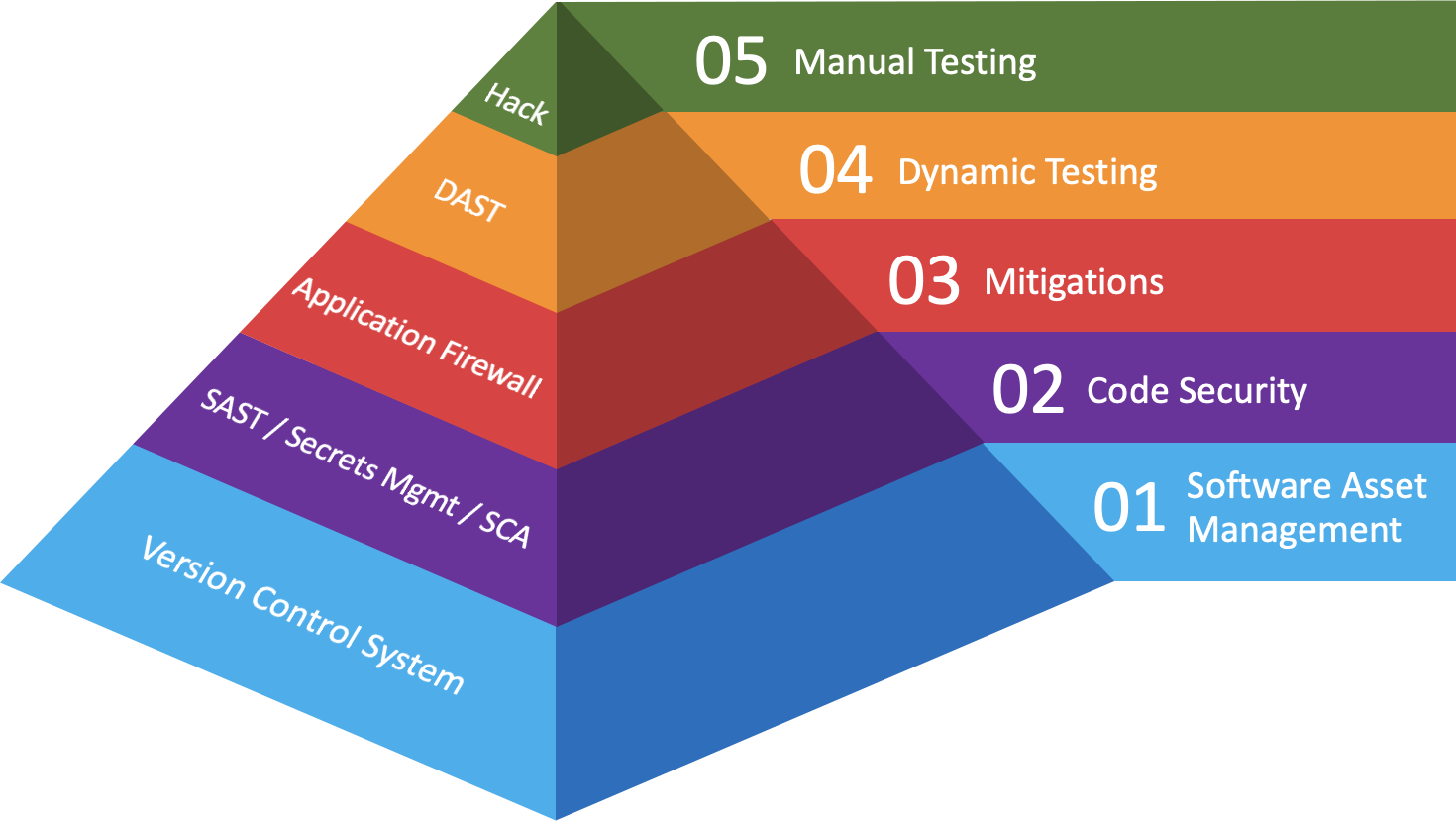

In the first post in this series I gave an overview of what I consider the “DevSecOps Essentials”, in terms of both practices and their corresponding technologies.

If you’ve done everything you can to address Software Asset Management, Code Security, and Mitigations for those vulnerabilities you weren’t able to fix, then hopefully you’ve performed Dynamic Testing to validate the resilience of your applications - because 💩 is about to get real.

As the saying goes, you don’t have to outrun the bear 🐻 you just have to outrun the next person trying to outrun the bear. Anyway, with that amusing visual in mind, the diagram above recaps how I categorize where companies should make investments in order to maximize the scale and impact of their DevSecOps program.

Background

I vividly remember the first time Bryan and I had a conversation about Application Security. I had just finished delivering my training on Offensive Web Hacking at DerbyCon 7 and returned to the hotel room to hop on a call with Bryan and John at Thermo Fisher Scientific. You see, at the time I was working as a Trust & Security Engineer at Bugcrowd - and Thermo Fisher was looking at Bug Bounty programs.

Needless to say, Bryan and I hit it off and they decided to move forward with a tightly scoped, two-week engagement to test the company’s flagship web properties. Over the following couple of months I worked with Bryan’s team to get the program scoped and ready to launch. When launch day came, we were mutually excited about it.

Without going into detail, I’ll just say this: it didn’t go well for them 🔥 It didn’t go well at all.

At that time they hadn’t implemented any of the other DevSecOps Essentials in their Software Development Lifecycle. The only reasons I can fathom for purchasing a Bug Bounty program are regulatory requirements, or a desire to kick-start their Application Security program.

Anyway, that led to my next conversation with Bryan in February of 2018. I had launched the Application Security Weekly podcast a month prior with Paul Asadoorian, and Bryan gave me a lot of… interesting… feedback about the show 😅. We talked regularly after that - and in April of that year he gave it to me straight:

“Alright Keith - you talk a lot about this stuff on your podcast, and it’s time to see if you can actually do it. I need your resume, because I’m hiring a Manager of Application Security.”

Why bother with Manual Testing?

First of all, depending on the industry your company works in, you might be required by government regulation(s) to perform manual testing in order to validate the security of your company’s software. Many governments around the world require such testing as part of a compliance regime (🤮) that often coincides with some form of security certification.

Secondly, your security tools will miss something in the process. There’s no substitute for a persistent adversary - whether that’s a 5-year-old who wants to play more Roblox, or a 25-year-old making millions from ransomware payments. Logic flaws will crop up in your code in ways that lead to exploitable vulnerabilities. Those exploitable vulnerabilities could lead to a business compromise.

Trust me when I say that you want to know about these vulnerabilities before someone malicious does.

Bug Bounty Hunting

The world of Security Research changed profoundly the day that Katie Moussouris pushed Microsoft to pay researchers for their findings. Since then, her work - along with the work that Casey Ellis, Jason Haddix, and others have done - has made this concept accessible to other companies.

In an ideal world, Bug Bounty Hunting is the process of performing security research within an approved scope against a target company. If you find something, you can report it through their bug bounty program and - if it’s not the dreaded “duplicate” finding - receive payment at a fixed rate based on the vulnerability’s severity. That finding then becomes a ticket in the development team’s backlog for prioritized remediation.
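To make that flow a little more concrete, here is a minimal sketch of how a program might triage an incoming report: check that the asset is in scope, check whether the finding is a duplicate, then look up a fixed payout by severity. Everything in it is hypothetical - the scope patterns, the `Report` fields, and the payout amounts are placeholders for illustration, not figures from any real program.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical program settings: placeholder values, not from any real program.
IN_SCOPE = ["*.example.com", "example.com"]
PAYOUTS = {"low": 100, "medium": 500, "high": 2000, "critical": 5000}


@dataclass(frozen=True)
class Report:
    host: str        # asset the finding was reported against
    vuln_class: str  # e.g. "xss", "idor"
    endpoint: str    # affected path
    severity: str    # one of the PAYOUTS keys


def triage(report: Report, seen: set) -> tuple:
    """Return (decision, payout) for a report and record accepted ones in `seen`."""
    # 1. Anything outside the approved scope is rejected before other checks.
    if not any(fnmatch(report.host, pattern) for pattern in IN_SCOPE):
        return ("out_of_scope", 0)

    # 2. A finding already reported for the same asset and endpoint is a duplicate.
    key = (report.host, report.vuln_class, report.endpoint)
    if key in seen:
        return ("duplicate", 0)

    # 3. Otherwise accept it and pay the fixed rate for its severity.
    seen.add(key)
    return ("accepted", PAYOUTS[report.severity])
```

Real programs make these calls with a lot more human judgment than a lookup table, which is exactly where the friction described next tends to come from.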

Unfortunately things don’t always work out this way, because companies are complex and security researchers are human beings. What happens when a company marks a vulnerability as “won’t fix” and stiffs the researcher on payment - only to fix the bug anyway? 😠 Likewise, what happens when a security researcher reports a critical finding on something that should have been out of scope? 😖

I don’t have great answers to either situation - but I will say this: as a company, if you treat security researchers with empathy and respect they will often reciprocate. Due to the bug bounty experience Thermo Fisher had, one of the first things I did was institute a Vulnerability Disclosure Program (VDP).

In that program we made it clear that we were interested in working with researchers who were motivated to help Thermo Fisher be more secure, but could not guarantee payment. We found that some researchers became very active on the program, were very polite and empathetic to the challenges we faced, and made no demands of us in our program. In turn, we paid those researchers 💰 because they consistently helped us improve our security.

My advice for security researchers would be to check your motives before performing research against a target. If you’re performing research with the explicit purpose of getting paid, then hunt on programs that have explicitly stated they will pay you for your work. “Beg Bounty” is real, and it creates a tremendous amount of noise for companies who already have a lot of problems to deal with.

On the other hand, if your motive is to sharpen your skills and help companies be more secure in the process, then you’re more likely to be treated with the respect and appreciation you deserve - often in the form of payment. One of the first people I hired was a hardware hacker who reported vulnerabilities in a Thermo Fisher device through coordinated disclosure.

How to support this content: If you find this post useful, enjoyable, or influential (and have the coin to spare) - you can support the content I create via Patreon. Thank you to those who already support this blog! 😊 And now, back to that content you were enjoying! 🎉

Application Penetration Testing (Pentesting)

The more traditional path for companies would be to perform regular pentests. These usually consist of tightly scoped engagements where a team of researchers will assess the security of an application from one of three perspectives:

- Black Box (no access to source code or other telemetry)

- Crystal Box (access to telemetry and/or back-end services)

- Security Review / Security Assessment (access to source code)

Regardless of the kind of test, the output usually comes in the form of a PDF report that is ingested by the security team, and then prioritized for remediation with the project management and development organizations. Tickets get created in backlogs, and (hopefully) findings get addressed in a timely fashion. 👍
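As a rough illustration of that hand-off, the sketch below turns a list of findings into backlog tickets ordered by severity, each with a remediation due date. The finding fields, the ticket shape, and the remediation windows in `SLA_DAYS` are all assumptions made for this example; use whatever your own policy and ticketing system dictate.

```python
from datetime import date, timedelta

# Hypothetical remediation windows in days; set these to match your own policy.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90, "low": 180}
SEVERITY_ORDER = ["critical", "high", "medium", "low"]


def findings_to_tickets(findings: list, reported_on: date) -> list:
    """Turn pentest findings into backlog tickets, most severe first."""
    ranked = sorted(findings, key=lambda f: SEVERITY_ORDER.index(f["severity"]))
    return [
        {
            "title": f"[{f['severity'].upper()}] {f['title']}",
            # Due date is the report date plus the window for that severity.
            "due": reported_on + timedelta(days=SLA_DAYS[f["severity"]]),
        }
        for f in ranked
    ]
```

Attaching a due date to every ticket also makes it easy to see, a year later, which findings were never addressed.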

That being said, many pentesters will tell you they often go back the following year and the same vulnerabilities are present. If your company is investing in regular pentests as a check-the-box exercise for regulatory or security certification requirements - and you aren’t fixing the findings - then you’re wasting your time, and doing an incredible disservice to your customers. 👎

As I’ve said before, Security is a Feature. Your customers will not ask you for it - they expect it. If you fail to act accordingly, it’s only a matter of time before a security incident happens; the scale and impact of that incident are entirely up to you.

A few (more) words on Hybrid Scanners

I briefly mentioned Hybrid Scanners in my post on Dynamic Testing, and wanted to say a few more words on how these can add value to your DevSecOps Program. In short, you should invest in licenses for such tools, and in the training your security team needs in order to use the tools effectively.

When a bug bounty finding gets reported or a pentest team delivers its findings, you will inevitably want to reproduce the vulnerability. Part of the value in doing this is so that you can show leadership the potential impacts of the vulnerability being reported. The other part is so that you can validate how remediations or mitigations protect against exploitation. ⚔️

The field of Application Security / DevSecOps continues to grow and evolve as software development becomes increasingly complex; there is a ton of value to be had from investing in the training your people need to stay ahead of the curve. I’ll speak more to this in my final post in the series on Talent, but I’ll summarize by saying that training generates significant dividends.

TL;DR / Summary

In nature, predators evolve faster than prey because it’s required in order to survive. The same can be said about adversaries when it comes to software security. Once you’ve made investments in the other layers of the DevSecOps Essentials, it’s worthwhile to validate the resilience of your program with real adversaries whose services you’ve chosen for yourself.

That being said, when signing up for some form of manual testing it’s worth asking: can you handle continuous security testing, or is the development and security maturity of the company better suited for point-in-time assessments?

In my experience, continuous testing in the form of a Bug Bounty program is better suited for mature organizations that have the people, process, and technologies aligned to receive, validate, and respond to reports from security researchers. Thermo Fisher Scientific was not a mature organization when they initially signed up for a bug bounty program.

On the other hand, if you’re looking to build a DevSecOps program and have a limited budget - a cheap way to show how badly the company needs to make investments in this space would be to run a bug bounty program 🤷 Security researchers will thank you for the easy money.

Alternatively, if you’re looking for validation of your security efforts but aren’t quite mature enough for continuous security testing then I’d recommend going with a traditional pentest. Some companies will offer these in annual, bi-annual, or quarterly packages that make for good snapshots of your progress.

Either way there’s no substitute for real adversaries, and it’s worth investing in manual testing in order to exercise the resilience of your DevSecOps program. Being able to replicate these findings internally with Hybrid Scanning tools and training for your team will pay dividends beyond the third-party manual testing you perform.

As always, thanks again for stopping by! Stay tuned for my final post in this series focusing on Talent, and in the meantime you can git checkout other (usually off-topic) content I’m reading over at Instapaper.

Until next time, remember to git commit && stay classy!

Cheers,

Keith // securingdev
